There is a widely-held view that technology is far ahead of the policies that we rely upon to guide its use. But the converse is also true: the policies that enable nefarious use of tech are also far ahead of the technology itself. In other words, forward-looking policy views on intended uses are often ‘in the can’, just waiting for the enabling tech to become commercially viable. I call this the “policy paradox”, which is the idea that the lag between tech and policy goes in both directions: tech innovation is ahead of the policies we expect to catch up and protect us from harm, while it was policy that created the demand for the tech in the first place.

THE PARADOX

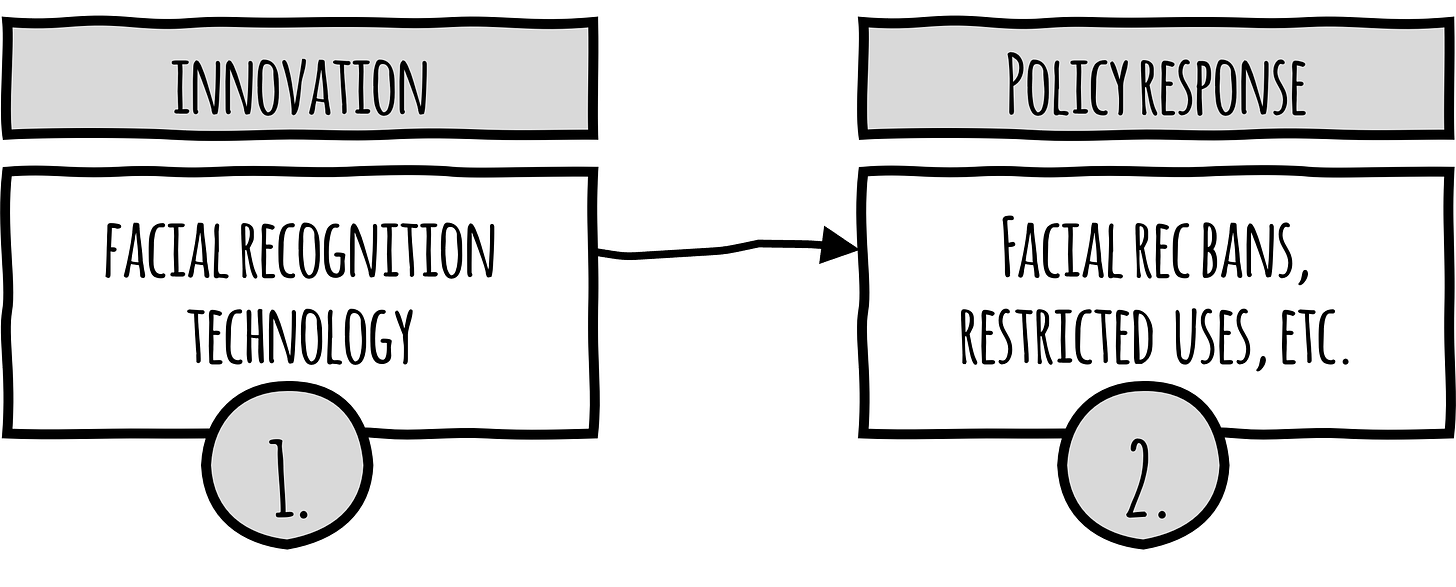

Tech industry watchers in the policy space often echo a consistent refrain: that the policy environment always lags behind tech innovation, and that the arrival of new tech always catches slow, bureaucratic policy and regulatory efforts flat-footed. In its most simplistic form, it’s a two-step process in which innovation demands a policy response (using facial recognition technology as an example):

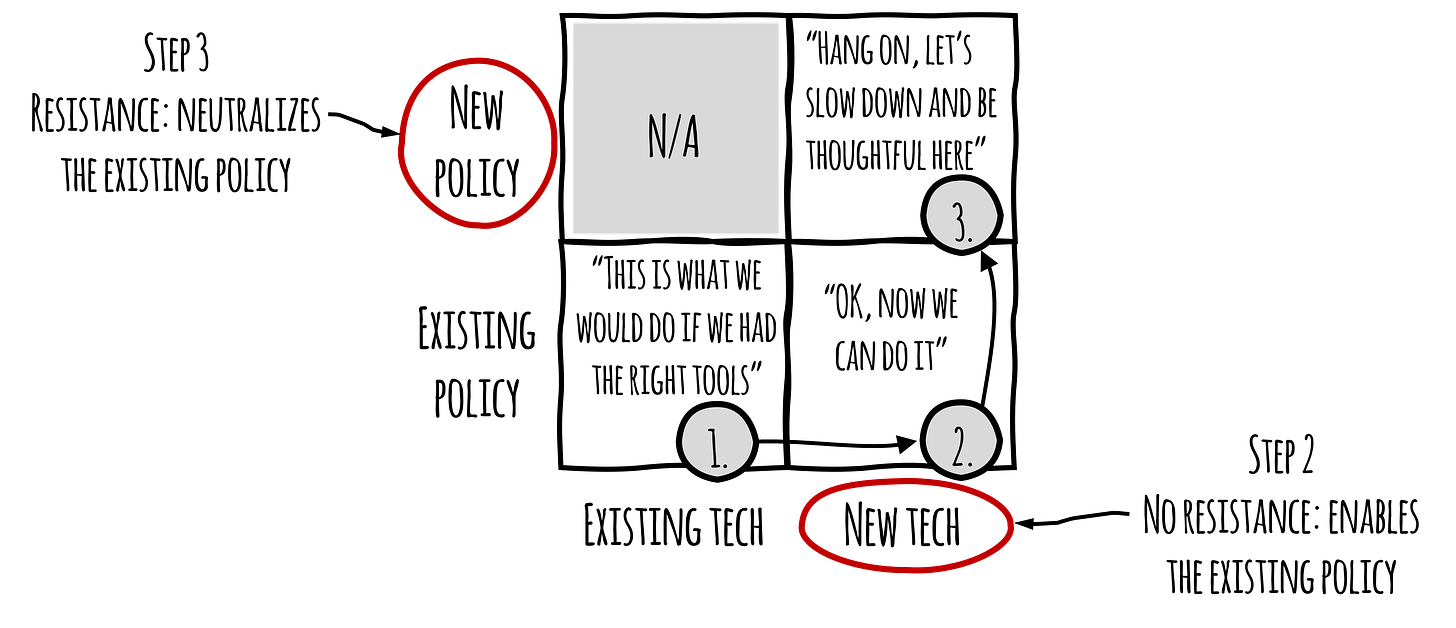

I have a different view. In many (perhaps most) cases, existing policy thinking reflects a future state in which the “right” or “better” technology to advance the policy goal simply hasn't been invented yet. From this perspective, the idea that policy lags tech is initially the other way around: it’s the tech that’s catching up to (in many cases, unpopular, unethical, or legally questionable) policy. Continuing with the facial recognition example, reality looks more like this, in which the thing we’re calling “innovation” is actually a “technology response” to an existing policy:

An example I've written about before is the Allied policy of “strategic bombing” in World War II, which was carried out for most of the war with conventional weapons for firebombing attacks. Then, in the summer of 1945, a new technology became available, and the policy envisioned years before simply continued, but now more efficiently. It’s true that the atomic bomb is also a canonical example of a new technology that created an immediate need for a lot of new policy, regulation, and law. But all of those things took place after the policy objective was achieved: bringing an end to the war during a time in which the 1907 Hague Conventions did not expressly prohibit bombardment of civilians. After the war, humanitarian law (finally) took into account the use of aircraft in military conflict and recognized that the policy of firebombing cities (what we call “terror bombing” today) is a war crime.

In some sense, the atomic bomb story is an example of how things “should” work: an existing and ethically dubious policy makes use of new disruptive tech, but the new tech is so frightening and dangerous that it demands creation of new policy. This three-step process—(1) existing policy, which (2) creates demand for new tech, which (3) demands new policy—is the cycle we *should* be in today, but we are not. Why is it so hard to get to #3? Why can’t we have policy strategies that are, as Eisenstat and Gilman suggest, nimble enough to react to these technology disruptions? I think the answer is simple: it’s because often the people and institutions with the power to craft new policies would rather not undercut the old ones. In a great many cases, the scary, controversial technology riddled with ethical questions is actually working precisely as intended to advance a policy goal that pre-dated its invention.

There are many examples that are probably more suitable for a longer piece, but here are just a couple that illustrate the point.

NATSEC POLICY, SURVEILLANCE TECH

I was doing some research the other day on the Patriot Act and I’d forgotten about the breakneck speed with which it was introduced in Congress: one week after September 11, 2001. It was signed into law on October 26, 2001, barely a month later. If you’re not familiar, it’s worth a lightweight refresher, but the gist of it is that it vastly expanded the government’s ability to conduct domestic and international surveillance. Do we really think congressional staffers started work on September 12th to pull this bill together? It’s more plausible to suggest its key provisions were defined in a policy document, shoved in a desk drawer, and simply dusted off, formatted as a bill, and put to Congress for a vote. Since that time, a bevy of “innovation” in surveillance tech has hit the market to capitalize on what was sure to be a growing market, and indeed it was (and still is). Things got even more interesting in 2007 with the passage of an amendment to FISA called the Protect America Act that led to the NSA’s PRISM program, disclosed by Edward Snowden in 2013. It seems a lot like building upon circa-2001 policy while waiting for better and better tech to come along to make it more efficient and effective. The Patriot Act was extended three times before expiring in 2020. Meanwhile, law and policymakers have been talking about federal privacy legislation for 20 years, with nothing to show for it. Why? Why is it that the government can produce the text of the Patriot Act in a week, pass it in a month, and extend it three times in addition to amending and reauthorizing FISA, while the federal privacy legislation can gets kicked down the road year after year? Maybe it’s because the existing policy that leverages this new disruptive tech is the policy of choice. Maybe it was the new tech chasing after the existing policy all along, and not the other way around.

FACIAL RECOGNITION TECHNOLOGY

In the tech policy and ethics world, few technologies are studied more than facial recognition. The history of facial rec research goes back a long way, beginning with CIA-funded research by Woody Bledsoe and his team at Panoramic Research, Inc. in the 1960s. The CIA’s involvement implies obvious linkage to Cold War counterespionage efforts, but the U.S. government “has continued actively developing facial recognition technology since this initial project, both through funding academic research in the area and building their own internal systems” (Stevens, Keyes, 2021). Because these efforts were often in partnership with academia and the private sector, more information about them became publicly known over time, exemplified by IBM’s work with MIT Media Lab and the Pentagon in the 1990s that suggested a “wide range of potential uses” that included “automating police mugshot searches”. The history of this technology is vast, spanning both of the AI winters and numerous false starts that effectively ended with the arrival of deep learning. Over the course of the last decade, facial recognition technology has been the focus of AI ethicists, privacy advocates, civil society, and many others calling for a policy response to this “new” technology. The lineage of facial rec, however, suggests that the government’s policy position on use of this tech was firmly established decades ago, and that it was simply waiting for a commercially viable technology response to make it real.

A NEW FRAMING

We really need to move away from the default framing that asserts law, regulation, and policy must somehow “catch up” to today’s innovations, and instead recognize that law, regulation, and policy often reflect what powerful institutions envisioned as a future state, and that those institutions just need to wait patiently for the right tools to come along. And when those tools do come along, resistance to any new policy that would diminish their effect is what we see week in and week out, in congressional hearings, press releases, tweets, and cable news hits. There is a reason for it.

In my view, this framing better explains why “do nothing” is not only very much in the consideration set of policy options, but often seems like the most attractive option. Enablement of existing policy with new tech is a lot like entitlements: once they’re there, they’re difficult to claw back. There are a lot of dynamics here: not wanting to diminish the free market’s incentive to continue innovating, not wanting to forfeit beneficial uses of a new technology, and of course corporate lobbying and money sloshing around in politics, which are separate topics altogether. We do, however, need to look at so-called “bad” technologies in a policy context to avoid letting people off the hook, because people decide how these tools are used, and people stand between continued societal harm and regulatory safeguards. We know about most government policy positions today because, well, they’re mostly public. We need to use this knowledge to better anticipate what near-future tech will mean for society and start the process of regulatory safeguarding in advance.

Tech isn’t always waiting for new policy to regulate it. It’s often the policy that’s waiting for new tech, and once new tech lands in an existing policy context, that becomes the end state.
