Anthropic and the Pentagon

The standoff between Anthropic and the DoD is yet another example of a pre-existing government policy just waiting for the right tech to come along.

The big story in tech news this week is the debate between Anthropic and the US Department of Defense (DoD). The crux of the dispute is Anthropic’s decision to deem autonomous weapons and domestic surveillance unacceptable uses for Claude, its large language model (LLM) platform. The DoD wants unrestricted access to Claude, saying Anthropic need only comply with US law. The interesting bit about this standoff is that it doesn’t fit the pattern of new tech coming along, followed by the government’s development of a policy stating how to use it. In this case, it seems like there’s already an existing policy in place, and that the government simply wants unrestricted access to the tech to make the policy real, in that order.

A few years ago I wrote a piece entitled Policy Paradox to point out that, despite the conventional wisdom that new tech begets new acceptable use policy, it’s quite often the case that the acceptable use policy is already, as they say in Hollywood, “in the can” and is simply waiting for capable tech to become available to make the use case possible.

The atomic bomb is the canonical example of this pattern, as the US warfighting policy of firebombing cities and killing civilians was well-established throughout WW II, and was not prohibited by the 1907 Hague Conventions. By the time the A-bomb was developed in 1945, the US was simply looking for a better, more terrifying and decisive instrument with which to carry out a policy that had already been in force (and in practice) since 1942.

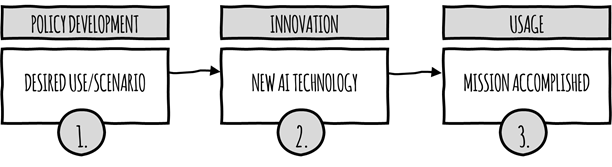

In the context of government use of modern tech (especially advanced AI), this is what most people think is going on:

There is an assumed order of operations: new tech, a resultant policy, and usage that is constrained by the policy intervention. We all know that policymakers don’t work that fast (take digital privacy, for example), but there is a certain comfort one takes in the thought that alarming technology is a catalyst for development of policy to mitigate risk.

But more often than not, here’s what’s really going on ...

If you need a more relevant historical example that’s grounded in AI, here’s one, mapped to the graphic above …

The CIA spent decades lamenting “if only we had better tech” while funding facial recognition research efforts at IBM, MIT, and elsewhere as part of its counterespionage efforts during the Cold War. This work started in the 1960s, not long after machine intelligence was conceived as a legitimate field of study at the 1956 Dartmouth Workshop.

In the years that followed, AI was an ongoing source of disappointment until 2012, when AlexNet won the ImageNet competition. Deep learning had finally made AI commercially viable.

The rest is history. Facial recognition technology (FRT) is now everywhere when it comes to law enforcement, national security, counterterrorism, and of course immigration enforcement. It seems like FRT is finally, at long last, able to support the government’s long-desired scenarios and policy goals.

So here we are with Anthropic and the Pentagon. The government has already made up its mind about what it wants to do, and now the tech is finally viable. The historical context concerning autonomous warfare is centered on the well-known DoD Directive 3000.09, Autonomy in Weapon Systems, which effectively says, “We won’t use fully autonomous systems in warfare unless we have to, and then we will.” If you want to go deep, it’s a 20+-page policy document filled with conditions, caveats, ethical principles, and guidelines for commanders and operators describing scenarios under which such systems can and cannot be used.

Autonomous weapons are already in use and have been for quite a while. One example is the US Navy’s Phalanx close-in weapon system (CIWS), which defends military vessels from incoming threats such as antiship missiles, for which human-in-the-loop reaction times would be woefully insufficient. There are other examples as well. For more depth, history, and background on autonomous weapons, I’d highly recommend Paul Scharre’s excellent book, Army of None (2018).

When it comes to AI in warfare, the use of autonomous systems for target identification, target acquisition, and attack initiation was always the desired scenario … the tech just wasn’t there yet. Now that it’s here, the Pentagon is essentially saying, “It’s about time,” and it was not banking on a defense contractor taking responsibility for how its products are used. I give Anthropic a lot of credit for sticking to its guns, pardon the expression. The deadline for compliance offered by the DoD is the end of this week. I hope the company doesn’t cave, but as I’ve said often in various writings and posts, profit-seeking entities have a way of making sure shareholder interests rise to the top. I would love to be proven wrong here.
